Measurement is a curious thing. Everyone is talking about it. A lot of people are doing it. All that talk and all that measurement cause even more people to do it. I mean, it must be important, right?

Contagious shooting.

Offsides. They jumped first. We all jumped.

Strong words. Actions. Open doors. Imply permission.

Just don't.

Buzz. Some are testing because everyone else is doing it and they do not know why they are doing it themselves. It is contagious and it is killing us.

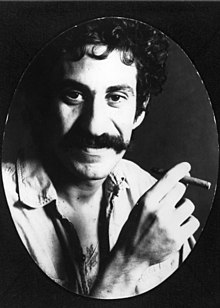

There are a few problems that are typical of assessment culture. They differ in severity. Jim Croce used to talk about causing trouble in Chester, PA, where they would cut you four kinds of bad: long, deep, wide, and often. Who would think that I would ever talk about assessment and Jim Croce in the same paragraph? I like Jim Croce. I like assessment. I do not like the abuse of assessment and data. It gives an essential part of education a bad name. Yes, I said essential. When it is done well and done correctly, it is good stuff. Don’t mess around with Jim. Errr, Slim.

The effect on our students of information that is pertinent to neither education nor assessment is referred to in the testing field as “unintended consequences.” We need to consider them. Seriously.

1. Long

Rather, length. Any assessment that causes the facilitation of learning to be interrupted is too often long and disruptive. It is important for the assessor to consider what is actually being tested when distributing a test with 120 items. My first assumption is that they are assessing attention and fortitude. Stubborn constitution? While it may be clear to the test creator that each of these items has a clear purpose, I pose this question–has the need for coverage been obscured by the other challenges that are presented before those questions are asked?

The length of a test is one of the first things that a student checks when it is delivered to their desk. Flip through and count the pages. See if they are multiple choice, short answer, long answer, or essay. Matching? True/False?

2. Deep

Often, the coverage of an assessment is haphazardly accounted for by length. Oops. Depth of an assessment can be addressed with a shovel or with a scalpel. Do you investigate like an excavator or a surgeon? While there are several questions that could get to the bottom of a student’s understanding of a novel, mathematical concept, or scientific theory, is it possible that there is one question that could be used to demonstrate understanding of the larger constructs? The answer is usually yes and the rationale that is usually defended is depth.

When quality inquiry includes necessary skills, we begin to scratch the surface of depth. A complex skill often trumps less complex skills–not always the case but often the case. You have heard the saying, “You have to crawl before you can walk.” If your child went straight to walking without crawling, would you intervene? Neither did I. It is not a completely common phenomenon but it happens and aside from toddler locomotion, there are not really any good reasons to crawl. Either way, I would bet that you could learn it later.

3. Wide

Breadth of assessment is key. It probably means something completely different than what you think, though. The wideness of assessment does not have to include every aspect of what is being taught. Variation in the width of coverage is often a function of the interests our students have, which either narrow or widen their take on the material. That is fine.

As educators, we must widen our assessment in order to provide every opportunity for our students to demonstrate competence and understanding. If this goal is achieved by one student through a presentation and another through various and typical testing procedures, why should that prove an issue?

BECAUSE in college, they will get scantron tests so they need to get used to it!

That is not a reasonable answer. I do not care who says so, either.

If you want students to get better at scantron tests because that is what they will encounter, that is fine and in some contexts a reasonable goal. There is, however, no reason to restrict a student in their demonstration of competence and understanding in the process.

You will often hear about differentiated instruction, but I am telling you that what citizens of our age need is the opportunity for differentiated assessment. You see, differentiated instruction is an appealing concept but its goal is achievement on typical assessment procedures–if you teach them differently, they will all be able to achieve in the same way!

No. The teaching is probably not the issue. I will put myself out there and say that you are probably a good enough teacher. It is more likely that a student is capable of demonstrating understanding in a way that is not being assessed.

If I hear “they know the material but they cannot pass the test” one more time I will scream. Twice.

4. Often

The frequency of assessment is not simply a problem of testing too often. It is also a problem of failing to assess often enough. Notice that I am referring to testing specifically and assessment in general. The “movement” to get rid of testing and assessment and measurement and grades aside, please understand that we are constantly assessing, judging, and measuring. After all that, we act, stop acting, or change the methods and manner of acting to meet the assessed need. If you want to attach a letter, number, or whatever to that–fine. Do not think that by doing away with scantrons, letters, and numbers you have put the assessment monster out of the room. It is still there. Boo.

Ecological validity is an issue that is at the forefront of research these days. Developing methods of observation and assessment that fit and make sense in the context of the learning scenario can be challenging. Is it possible to measure some aspect of learning within the context of that learning using tasks that make sense within that domain? If not, that is an issue of ecological validity. Think about the NFL combines that are used by professional teams to assess the viability of future draft picks. These sets of skills are supposed to be indicators of the athletes’ ability to do well in an NFL game. We know, though, that the only way to know if an athlete is capable of playing professional football is to have them play professional football. Ecological validity.

Slightly more than a rant.

Pt. 1 coming soon!

Find out where it’s at.

Not hustlin’ people strange to you.

Even if you do have a 2-piece custom-made pool cue.

Valid points that everyone should think about more often – looking forward to reading part 1.